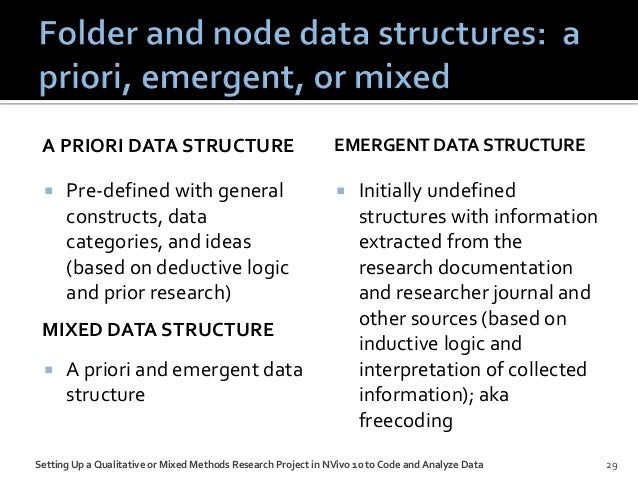

Rather than trying to squeeze my thoughts and experience of all my data analysis into one blog post, I intend to write shorter (!) posts about different stages as I progress. My last blog post was about developing my analysis plan. Knowing what I know now, I am so thankful I spent the time doing that!

I am following Miles and Huberman's approach to data analysis and have the 3rd edition (Miles et al., 2014). There are lots of similarities in this edition but also some differences. The purpose of this post is to share with you my first step of data analysis: data condensation. This used to be called data reduction (Miles and Huberman 1994), but it was changed because data reduction implies "weakening or losing something in the process".

So immediately following my focus groups and interviews, I took extensive notes about salient factors (more about that in another blog post). From these notes I created a contact summary form, as advocated by Miles and Huberman and one of my supervisors, which synthesised all this information. This is a very simple and highly valuable thing to do. I have repeatedly referred back to my contact summary forms throughout this process (if anyone wants the template, just ask).

I also transcribed verbatim all my focus groups and interviews myself as soon as I could after data collection. This was a very, very long and, at times, laborious task, but again highly valuable for really getting to know my data.

I listened to each audio recording (listening only, no note taking). Then I read each transcript (reading only, no note taking). Then I listened to each audio recording again whilst reading my transcripts. This time I scribbled notes down on a pad and drew various mind maps and diagrams. After all that, I was pretty sure I had immersed myself in my data (even though I hated listening to myself!).

I prepared transcripts for importing into NVivo 10. This involved ensuring consistent format and style and anonymising my participants by allocating each of them a pseudonym and a code to differentiate public, healthcare and media professionals (a blog post about this here).

1st level coding: I developed a starting coding list based on my theoretical framework and wider literature to get me started (initial deductive approach). I listed these codes in a coding framework with clear operational definitions so that I had a clear understanding of what type of data needed to be assigned to each code. As I revised my codes, each transcript was re-read and re-coded. Throughout this stage, codes were revised or removed, and additional codes and subcodes were created as new themes emerged from the data (inductive approach). This process took quite a bit of time, but if it had not been done thoroughly, I can see how it could have caused me many problems later on.
